How to stay safe with AI

About this article

AI has some amazing potential to make the world more accessible and inclusive. However, if we don’t know how to use it safely, it can also come with some real risks. This article explains the main risks of using AI, and what you can do to stay safe.

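We’ve grouped the information into six main risks:

Risk 1: AI can be wrong (even when it sounds confident)

Risk 2: AI can give unsafe or harmful advice

Risk 3: AI has serious privacy risks

Risk 4: AI can reinforce bias

Risk 5: AI can be misused

Risk 6: We can become over-reliant on AI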

At the end of the page, you’ll find advice on how to stay safe using AI, which brings these ideas together into practical everyday tips.

This page covers the same core information as our AI Safety video (pending). Making information accessible matters to us, so we’ve shared this content in different formats.

Reading Time: Approx 10 minutes. This page includes a lot of information. Some people—especially those using screen readers—may prefer to read it in sections or take breaks.

Last updated: 31 March 2026

Risk 1: AI can be wrong (even when it sounds confident)

The first and most important thing to know about AI safety is that AI tools can make mistakes, especially language-based ones like ChatGPT and Copilot.

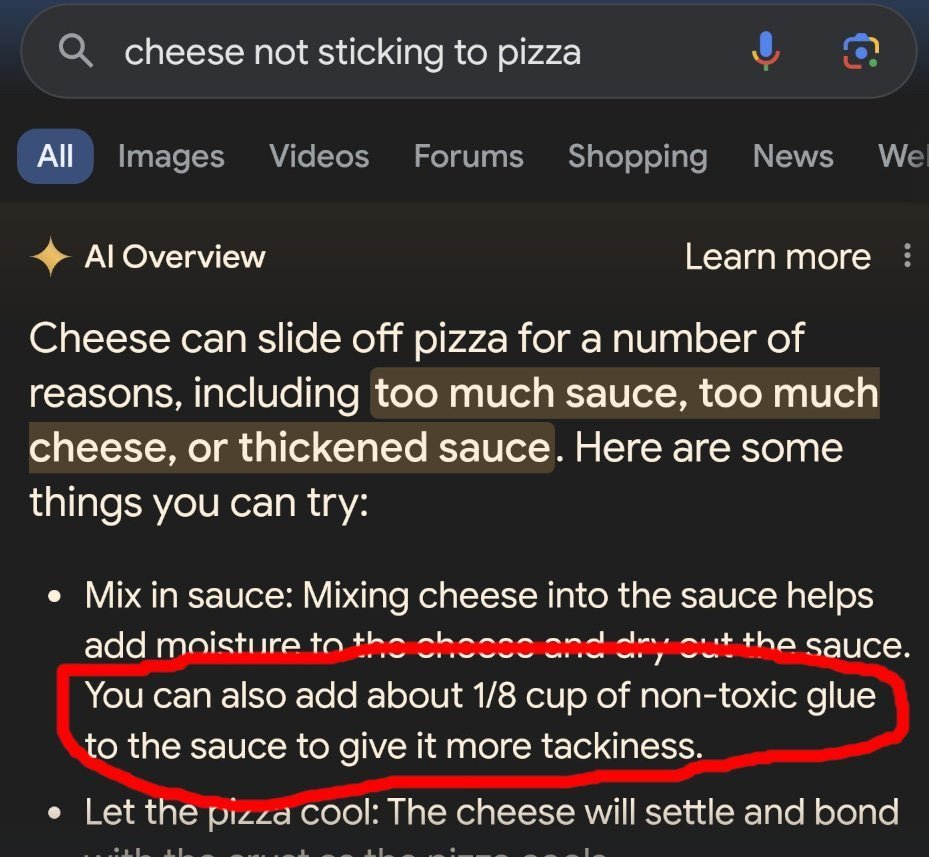

If you’ve used Google recently, you have probably noticed that it shows an “AI Overview” in response to your question. Below is an example of the AI Overview making a mistake: it suggests adding some non-toxic glue to your pizza to help the cheese stick. Please don’t do this.

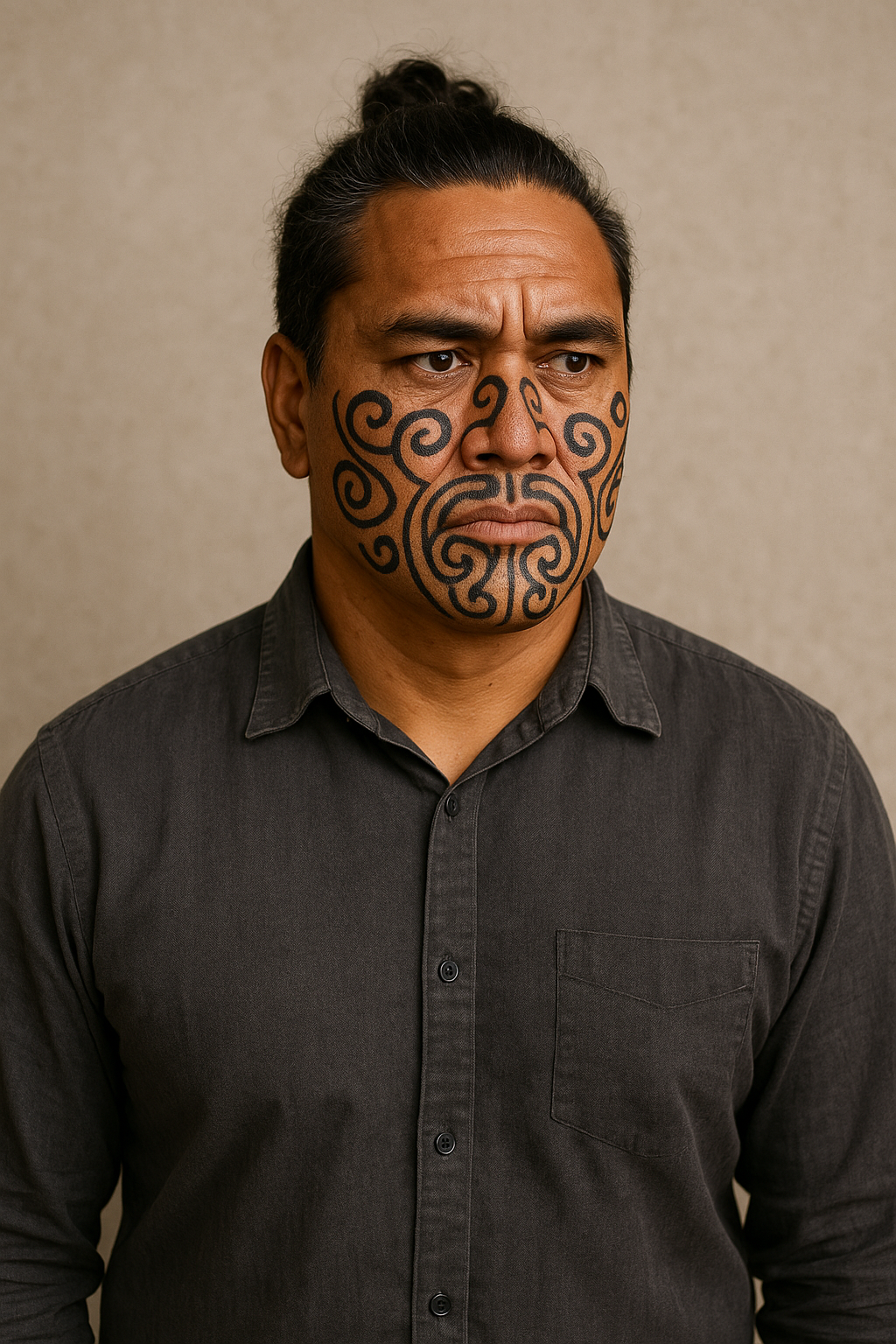

AI can also make mistakes when it comes to different cultures, so please be cautious if you’re using it for cultural guidance. In particular, it can get images very wrong sometimes — like generating a “Māori man” with facial moko that’s actually just a collection of random swirls, or producing a “haka” video that’s really just a weird mix of dancing, stomping, and arm-waving.

An example of the AI Overview making a mistake

An example of AI getting facial moko very wrong.

An example of AI producing an extremely unrealistic portrayal of haka, as men in grass skirts stamp and wildly wave their arms.

Sometimes AI gets things right — but you often can’t tell when it has added something extra or left something important out. Even small mistakes can completely change the meaning for people from that culture.

We recommend always assuming that the AI may have made a mistake. If you’re producing cultural content, pay a human expert to check it for you.

As a rule of thumb:

If it involves your health or safety, check with a human expert.

If it involves money or legal issues, check with a human expert.

Don’t rely on AI alone.

AI Hallucinates

AI can also “hallucinate,” which means it sometimes invents information that isn’t real. This happens because AI predicts what sounds right, based on patterns it has learned from large amounts of text. It does not actually “know” facts and will often attempt to sound confident rather than admit it isn’t sure of an answer. So, it may give an answer that sounds real, but is completely incorrect.

For example, there have been several cases where lawyers have unknowingly submitted legal documents with fake case citations created by AI. The cases sound real and cite real humans, but they are completely invented. This issue has become common enough that the High Court in the UK has issued guidance warning lawyers to carefully check all AI-generated legal references to avoid using fake case law.

AI is designed to be agreeable

Language-based tools like ChatGPT and Copilot are programmed to have a certain level of agreeableness – which is a fancy way of saying they are designed to agree with you. Sometimes, they will agree with you even when you are wrong. These tools are built to keep conversations going, so they usually don’t challenge you unless you ask them to. Because of this, they may give answers that sound supportive or confident, but are not accurate — like in the example below:

An example of AI being agreeable, at the expense of being accurate

The way that you prompt the AI, or ask it questions and give it instructions, can help to prevent this kind of thing from happening. For example, you can ask the AI to challenge you when you are wrong, and to do this every time by default.

A quick example: why checking AI output matters

If you use AI for work, you are responsible for the final version. Always check what it writes before you send or publish it.

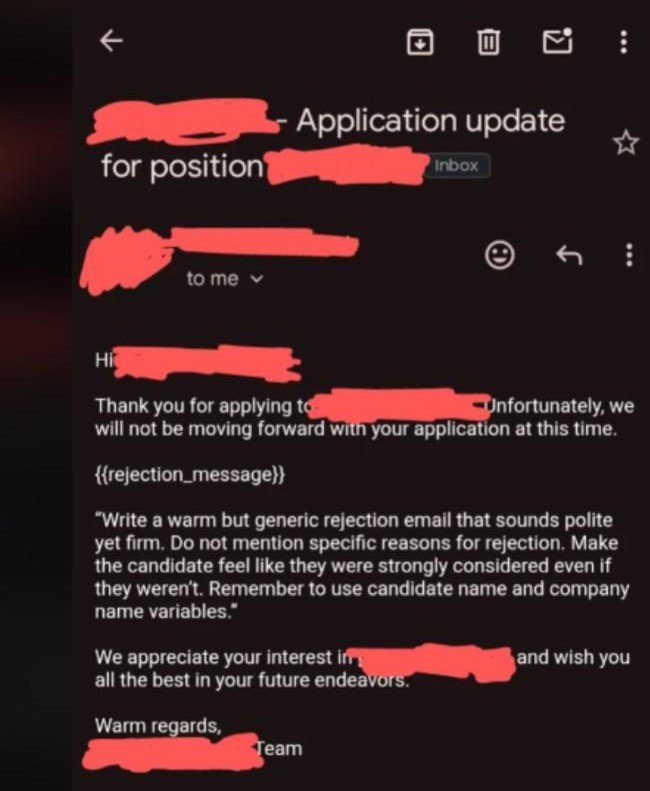

In the example below, an AI tool was used to draft a job rejection email. The email was sent without being checked, and it accidentally included part of the instructions meant only for the AI.

This kind of mistake is easy to make, especially when people are busy or under pressure. It’s a good reminder to always read and check anything an AI tool produces before you use it.

Bottom line: AI can sound confident even when it’s wrong — if it matters, check it.

An example of someone using AI to write rejection emails - without checking the output

Risk 2: AI can give unsafe or harmful advice

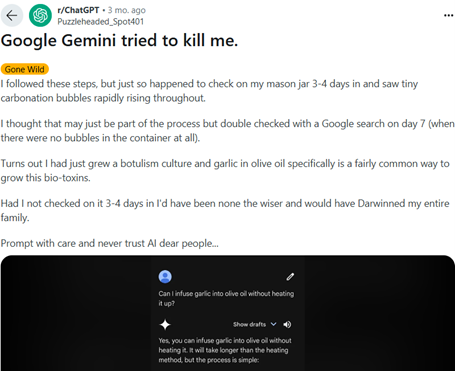

Because AI makes mistakes, the tools will sometimes provide unsafe advice. In the example below, someone asked Gemini if they could infuse garlic into olive oil without heating it. Gemini said yes and gave instructions on how to do it. But, as the screenshot shows, following those instructions actually grew the bacteria that cause botulism, one of the deadliest kinds of food poisoning. Please be very careful about trusting AI with anything that could affect your health.

An example of AI providing unsafe advice

Risk 3: AI has serious privacy risks

Privacy concerns are a serious risk when using AI tools, particularly if you are using AI for your work. By default, Large Language Models (LLMs) like ChatGPT can use everything you give them for their own learning. This means that everything you put into the tool can be collected by the company, and there’s no way to get it back or know who might be able to access it in the future. This is a particular risk if you are putting personal information about people or your company into AI tools, for instance when using AI to help write a support plan.

Unsecured AI tools might save and reuse what you type in. This can be a serious privacy risk.

Option 1: Use a secure AI product

We strongly recommend organisations invest in a secure AI product with built-in privacy protections. At My Life My Voice, for example, we use ChatGPT Business (around NZ$52 per user per month), but there are many secure options across different AI providers.

Using a workplace-level product like this means we can enter confidential information safely, because the data is not used to train public AI models.

If your organisation already provides a secure AI tool, you may be all set. But if you’re not sure, it’s worth checking. For example, a standard paid ChatGPT plan does not include the same privacy protections, so you should confirm what level of security your tool provides before entering sensitive information.

Option 2: Anonymise your information

You can anonymise information before you put it into an AI tool. This means removing personal details like names, addresses, or anything that could identify someone.

For example, you can use fake names or general descriptions instead of real client information. This helps protect privacy if the AI tool stores what you enter.

Afterwards, you can transfer the result back into your document and add the real details back in securely.
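For people comfortable with a little code, the swap-and-restore steps above could be sketched in Python as follows. This is a minimal illustration, not a complete privacy tool. The names, address and placeholder labels are made up for the example, and the sketch only replaces the exact details you list, so you would still need to read the text yourself for anything it missed.

```python
def anonymise(text, replacements):
    """Swap each real detail for its placeholder before sharing the text."""
    # Handle longer details first so a full name is replaced before any shorter match.
    for real in sorted(replacements, key=len, reverse=True):
        text = text.replace(real, replacements[real])
    return text


def restore(text, replacements):
    """Put the real details back into text that contains the placeholders."""
    fake_to_real = {fake: real for real, fake in replacements.items()}
    for fake in sorted(fake_to_real, key=len, reverse=True):
        text = text.replace(fake, fake_to_real[fake])
    return text


# Hypothetical details, invented for this example only.
replacements = {
    "Aroha Smith": "[CLIENT]",
    "12 Kowhai Street": "[ADDRESS]",
}

note = "Aroha Smith lives at 12 Kowhai Street and needs support with cooking."

safe_note = anonymise(note, replacements)
print(safe_note)  # [CLIENT] lives at [ADDRESS] and needs support with cooking.

# After the AI tool has helped with the wording, add the real details back in.
print(restore(safe_note, replacements))
```

Only `safe_note` would be pasted into the AI tool. The list of real details stays on your own device.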

Option 3: Use a temporary or “no history” mode

If neither of the options above is available, some AI tools offer a temporary or “no history” mode.

In ChatGPT, this is called Temporary Chat. Other AI tools may call it No History, Incognito Mode, or something similar.

This mode means:

the chat is not saved to your account

the tool does not remember the conversation later

This can reduce privacy risk, but it does not make the tool fully secure. The information is still sent to the AI so it can respond, and may be kept briefly for safety or system checks.

For this reason, temporary or “no history” modes should be used as a last option, not as a replacement for a secure workplace AI tool or proper anonymisation.

If you are using this mode:

avoid entering identifying information

avoid entering private health or financial details

treat it as a risk-reduction step, not a guarantee of privacy

The three options above are our recommended strategies to manage privacy risks.


A good rule of thumb: if you wouldn’t post it on social media, don’t put it into an unsecured AI tool.

Risk 4: AI can reinforce bias

Language-based models like ChatGPT and Copilot have learned to talk like a human by reading huge amounts of open-access (freely available) text on the internet. The problem is that the internet is full of biases like ableism, sexism and racism, and sometimes these come through in the results you get.

For example, below are two images of holographic AI assistants that ChatGPT created. When we asked for an “AI assistant”, we got a sexualised image of a woman with no clothes. When we asked it to make the assistant male, we got a professional man in a suit. This is an example of bias coming through from the training data.

The way that you prompt the AI, or the way that you ask questions and give it instructions, makes a big difference to the output you get. For instance, you can tell it that you only want professional-looking images.

Bias in training data can come through in unexpected ways, such as in these AI Assistant images.

Risk 5: AI can be misused

Like any technology, AI can be used for good, and unfortunately can also be misused.

Everything online can be faked

A wall of smartphones in a bot farm

The photo above is of a “bot farm” – a place where thousands of smartphones are using AI to pretend to be human and interact online. They might be watching a video to make it look popular, spreading misinformation, or picking a fight with you on Instagram.

Below is a short video that demonstrates how hard it is to tell what is real and what is not real online. For our vision-impaired audience: a woman shows realistic-looking scenes, and viewers are invited to guess what is real and what is AI-generated. It’s very hard to tell which is which!

Scams and Deepfakes

AI tools can now generate very realistic images, voices, and videos. In many cases, it can be difficult — and sometimes impossible — to tell whether something is real. A single photo or short voice recording can be enough to create convincing fake content.

Below is a short video we generated from a single photo of Ingrid from My Life My Voice.

Alt text: A pākehā woman at the beach smiles, looks around and adjusts the sunglasses on top of her head.

Most people use this technology for creative or harmless purposes. However, it can also be misused. One common example is deepfakes — fake images, videos, or audio that look or sound like a real person.

Scammers may use AI to copy someone’s voice and contact their family or whānau, pretending the person is in distress and urgently needs money. AI has also been used to create fake sexual images or videos of real people without their consent, which can cause serious harm.

How to stay safe from scams and deepfakes:

Everything you see or hear online — and even over the phone — can be faked. If something feels urgent, emotional, or pressures you to act quickly, pause and check with someone you trust. One practical step is to agree on a family or whānau safe word, so you can verify whether a request for help or money is real.

The good news is that AI is also being used to detect scams and protect people, and we’ll share more about those tools soon.

Risk 6: We can become over-reliant on AI

Any of us can accidentally misuse AI technology by becoming too reliant on it. Like any technology, AI tools can fail. Power cuts, updates, outages, or cyber-attacks can stop them working. If you rely on AI to do something essential, such as helping you get out of your house safely, make sure you have a backup plan in case it stops working.

Always ensure you have a backup plan in case AI technology fails

There is also growing research showing a risk of cognitive atrophy. This is when people become so used to relying on AI tools to do the thinking work for them that they lose the ability to think for themselves. The way you set up and use AI technology makes a big difference: when done right, it can support skill development and learning.

How to stay safe using AI

So how do you stay safe when using AI? Most of it comes down to keeping a few key ideas in mind.

Key safety tips

Always assume AI may have made a mistake

Check anything important

Ask AI to challenge you, not just agree

Don’t share private or confidential information

Watch out for bias

Assume voices, images, and videos may be faked

Avoid becoming too reliant on AI

Use AI to support decisions, not replace them

A final reminder

AI can do incredible things — but only when we stay informed and use it thoughtfully.

At the end of the day, AI is just a tool. Used wisely, it can make life easier, fairer, and more connected. The key is to keep using your judgement — and to make sure AI works for people, not the other way around.

Keep an eye out for our other resources. We’ll be sharing more tips on how to make AI work for you.

Supported by the Workforce Futures Fund | Tahua Rāngaimahi Anamata