Introduction to AI

(Artificial Intelligence)

About this article

You have almost certainly been hearing a lot about AI recently. This article explains what AI is, where you might already be encountering it, and why it matters — especially in everyday life, work, and accessibility.

This page covers the same core information as our Introduction to AI video (pending). Making information accessible matters to us, so we’ve shared this content in different formats.

Time to read: approx. 6 minutes. This page includes a lot of information. Some people, especially those using screen readers, may prefer to read it in sections or take breaks.

Last updated: 13 March 2026

So first up — what is AI?

AI is short for artificial intelligence.

It’s a type of technology that can learn from information and carry out tasks on its own.

AI isn’t a single technology — it’s an umbrella term that covers many different tools. Some AI technologies exist in the physical world — like robot vacuum cleaners or humanoid robots (robots that look like humans). Others are computer programmes, including chatbots, medical tools that can analyse images such as MRI scans, and so much more.

You may already be using AI without realising it. For example:

When your phone unlocks using facial recognition

When your email filters spam into a separate folder

When Netflix or Spotify recommends shows or music you might like

All of these are examples of AI that have been around for a while. These earlier AI tools were usually designed to do one job really well.

What’s changed in the last few years is the rise of newer AI tools that are significantly more powerful and can do many different tasks in one place. Instead of just recognising faces or recommending music, some AI tools can now help with writing, create images, and even provide visual descriptions of the real world in real time, all in the same tool. That shift is why AI has suddenly become much more visible, and why people are talking about it so much.

The most common type of AI you’ll come across: language-based AI (LLMs)

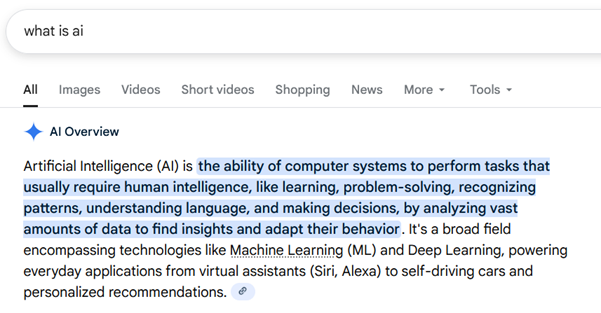

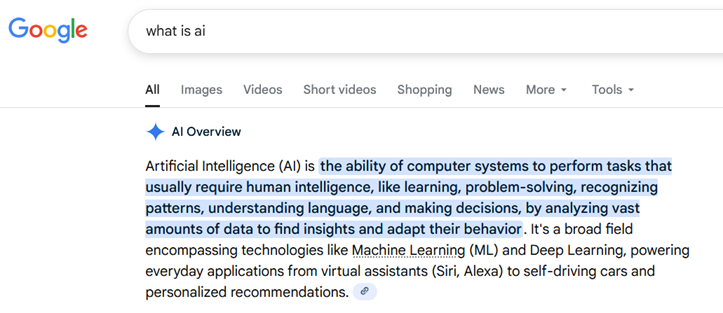

The most common type of AI you have probably been hearing about is called a Large Language Model, or an LLM for short. If you’ve used Google recently, you’ve probably already seen one. When you search for something and an “AI overview” appears at the top of the page, that’s an LLM in action, as shown in the accompanying screenshot.

An LLM is a tool that can read and respond to what you type — a bit like having a conversation with a person. You can ask it questions, get help with writing, or brainstorm ideas, and it will respond in plain language.

Below is a video showing someone using one of the most well-known LLMs, ChatGPT, for a simple task.

Alt text: This video shows someone asking ChatGPT “What is an LLM”. ChatGPT then responds with an explanation about Large Language Models.

Many LLMs can do more than just typing. Some also have voice and video features, so you can talk to them out loud and show them what you’re looking at in real time.

For example, here’s a video of our vision-impaired colleague James using ChatGPT’s video feature for the first time to read a café menu:

LLMs can also generate images and videos. Watch out for our upcoming article and video called Introduction to LLMs to learn more.

Focus less on paperwork, more on people

The dramatic growth in AI is starting to reshape everyday life and work.

In the support sector, AI can help with administrative tasks, but it can also support accessibility — like making information easier to understand or helping people communicate and access services. Used well, AI can free up more time for human connection — so support workers and organisations can focus less on paperwork, and more on people.

The reality is that AI is now unavoidable. That’s why learning about AI matters: understanding what it can do, and what it can’t, helps us use it well and avoid harm.

A warning – AI makes mistakes

As extraordinary as this new technology is, there are also serious risks that you need to be aware of. While some AI technologies are designed to sound and behave in very human-like ways, it’s really important to remember that AI is not human.

AI doesn’t have feelings or values, and it doesn’t truly understand meaning or context in the way that people do. AI technologies are advanced computer programmes that work by recognising patterns in large amounts of data. Because of this, AI sometimes gets things wrong. It can misread information, misunderstand context, or make strange assumptions. While some mistakes are harmless or even funny, others can be misleading or unsafe.

The image and video below show examples of AI doing things that a human would be very unlikely to do.

AI fail: An example of AI producing a very strange image of a wheelchair accessible bus

Alt text: A man in a wheelchair holds a white cane between two hands. The wheelchair is moving forward without him propelling it. Then the wheelchair morphs into him walking.

Important:

Because AI doesn’t actually understand what it’s saying, it can sometimes give answers that are wrong, misleading, or unsafe, even when it sounds very confident. For example, it might tell someone to mix cleaning chemicals that create toxic fumes. That’s what makes AI risky: it can sound like it knows what it’s talking about, even when it’s wrong.

AI should be used as a tool to support people, not as a replacement for human judgement, relationships, or decision-making.

We strongly recommend readers also check out our AI Safety article or watch our AI Safety video (coming soon…).

Te Tiriti o Waitangi and te ao Māori – a lens for thinking about AI

Once we understand what AI is, and why it needs care, the next question is responsibility. At My Life My Voice we are guided by Te Tiriti o Waitangi, and we have been working with Pou Tikanga, our cultural advisory group, to understand AI, including its ethical use and implications, through a bicultural lens.

In te ao Māori (the Māori worldview), knowledge is a taonga (treasure). It is not just information, but something that lives in people, places, relationships, and practices, and comes with responsibilities about how it is used and shared.

One way of thinking about knowledge is through wharekura, houses of learning that hold many baskets of knowledge. These baskets contain different kinds of understanding, including knowledge that can help humankind and knowledge, such as mākutu, that can cause harm.

We can think about AI in a similar way. AI tools draw on vast amounts of human-created information and can appear to hold many baskets of knowledge at once — helpful, harmful, and misleading. But AI does not understand where that knowledge comes from, how it is connected, or when it is appropriate to use. That responsibility sits with us – with people.

Much like our ancestors who navigated the vast Pacific by reading the stars and the currents, we must approach AI with a shared sense of stewardship, ensuring that these new digital tools are steered by human wisdom and the values of our community.

A note on te reo Māori and tikanga

Some AI tools can help with very limited te reo Māori tasks, such as checking spelling or drafting short, simple sentences. However, they have limited understanding of tikanga and do not recognise differences between iwi and hapū, and they can be confidently wrong.

A helpful way to think about this is through a pōwhiri example. AI can give general information about what a pōwhiri is and outline common stages, but it does not know the specific kawa (protocols) of a particular marae or how tikanga is practised within that community.

In the same way, AI does not understand whakapapa or the lived meaning of tikanga. It can support learning, but it cannot be trusted as an authority.

Image credit: By US Embassy New Zealand - https://www.flickr.com/photos/46907600@N02/53074344700/

Why learning about AI matters

AI is one of the most powerful opportunities of our time. It's natural to feel a bit daunted by it — many people do. Used well, AI has the potential to:

Break down barriers

Make work and daily life more accessible

Free up time for human connection and care

That's why disabled people and our allies need to be part of shaping how AI is used — building the skills and confidence to use it safely, ethically, and on our own terms.

We also need to help shape these systems from the beginning, so they reflect our lives and communities — because AI can carry bias, and fairness doesn’t happen by accident.

This is a pivotal moment. We don't have to sit back and let technology happen to us. Together, we can shape it, guide it, and make sure it supports better lives and futures for disabled people.

We’d love you to join us on this journey.

👉 Next step: Read our AI Safety article or watch our AI Safety video (coming soon) to understand the risks and how to use AI responsibly.

👉 Explore our other AI resources

👉 Want to stay updated? Register here

Supported by the Workforce Futures Fund | Tahua Rāngaimahi Anamata